www.tianyancha.com

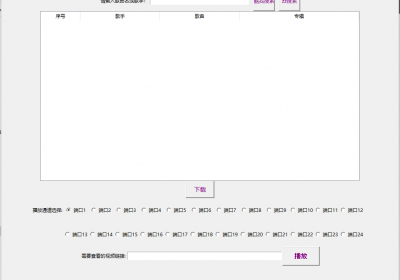

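Tianyancha only shows company information to logged-in users, so the scraper needs the cookie from a registered account. Register and log in yourself first.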

The code follows; it is fairly simple. The scraped information is saved to a txt file that is created automatically.

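If you are interested, you could sort the results into an Excel sheet instead, but since this chapter is about scraping, that is not covered here.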
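The script in this post builds its search URL with `quote()` from the standard library. As a small standalone sketch of what that call does to the search key (the variable names `encoded` and `search_url` are only for illustration), it percent-encodes the UTF-8 bytes of the Chinese characters, and `unquote()` reverses it:

```python
from urllib.parse import quote, unquote

key = '小米'

# quote() percent-encodes the UTF-8 bytes of every non-ASCII character
encoded = quote(key)

# The encoded key can then be appended to the search URL
search_url = 'https://www.tianyancha.com/search?key=' + encoded

print(encoded)           # %E5%B0%8F%E7%B1%B3
print(unquote(encoded))  # 小米
print(search_url)
```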

import requests

from bs4 import BeautifulSoup

from urllib.parse import quote

key = '小米'

#quote() percent-encodes the Chinese characters so they can be used in the URL

url = 'https://www.tianyancha.com/search?key='+quote(key)

#Replace the cookie below with the one copied from your own logged-in browser session

headers = {

'cookie': 'TYCID=fc6ce470e57211ec81057774bab03635; sensorsdata2015jssdkcross=%7B%22distinct_id%22%3A%22269516040%22%2C%22first_id%22%3A%221813823dec76a8-0cee4d1db5698f8-3c39471f-2073600-1813823dec8d03%22%2C%22props%22%3A%7B%22%24latest_traffic_source_type%22%3A%22%E7%9B%B4%E6%8E%A5%E6%B5%81%E9%87%8F%22%2C%22%24latest_search_keyword%22%3A%22%E6%9C%AA%E5%8F%96%E5%88%B0%E5%80%BC_%E7%9B%B4%E6%8E%A5%E6%89%93%E5%BC%80%22%2C%22%24latest_referrer%22%3A%22%22%7D%2C%22identities%22%3A%22eyIkaWRlbnRpdHlfbG9naW5faWQiOiIyNjk1MTYwNDAiLCIkaWRlbnRpdHlfY29va2llX2lkIjoiMTgxMzgyM2RlYzc2YTgtMGNlZTRkMWRiNTY5OGY4LTNjMzk0NzFmLTIwNzM2MDAtMTgxMzgyM2RlYzhkMDMifQ%3D%3D%22%2C%22history_login_id%22%3A%7B%22name%22%3A%22%24identity_login_id%22%2C%22value%22%3A%22269516040%22%7D%2C%22%24device_id%22%3A%221813823dec76a8-0cee4d1db5698f8-3c39471f-2073600-1813823dec8d03%22%7D; tyc-user-info=%7B%22state%22%3A%220%22%2C%22vipManager%22%3A%220%22%2C%22mobile%22%3A%2217675498658%22%7D; tyc-user-info-save-time=1654504310192; auth_token=eyJhbGciOiJIUzUxMiJ9.eyJzdWIiOiIxNzY3NTQ5ODY1OCIsImlhdCI6MTY1NDUwNDMwNywiZXhwIjoxNjU3MDk2MzA3fQ.oJwwlvu1R4fcbn1HdUdppTysa2QfmAsySJ1VTw0cCo722Zojg-0K9w9uUzyBldvtmgP2mDn2QN6EZwMpq1SHMA; aliyungf_tc=a97c9599592c9f8638dee5c163c553efe050fb8f6e3a5853034fc189262197b2; acw_tc=b65cfd7016549183637863954e261fbbafb4515898fbbfc9d80b75031f5ac3; csrfToken=zGcTS8niI-wlf74tDKh_scdI; bannerFlag=true',

'referer': 'https://www.tianyancha.com/',

'User-Agent': 'Mozilla/5.0 (Windows NT 6.1; Win64; x64; rv:101.0) Gecko/20100101 Firefox/101.0'

}

html = requests.get(url,headers=headers)

soup=BeautifulSoup(html.text,'lxml')

#soup.select_one returns only the first match; in the search results the first hit is usually the closest match

innerUrl=soup.select_one('a.index_alink__zcia5.link-click')['href']

#With the link to the best match in hand, what we want is the data on that page, so we send a new GET request to this URL

innerhtml = requests.get(innerUrl,headers=headers)

soup = BeautifulSoup(innerhtml.text,'lxml')

result = soup.select('.table.-striped-col.-breakall tr td')

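#Open the output file once and append every cell on its own line, so that all cells are saved rather than only the last one

with open('xiaomi.txt','a',encoding='utf-8') as f:

    for r in result:

        #Print each table cell of the first Xiaomi company, then save it to the txt file

        print(r.text)

        f.write(r.text+'\n')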

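One thing worth guarding against: `select_one` returns `None` when nothing matches, which may happen if the cookie has expired or the site has changed its class names, and indexing `['href']` on `None` raises a `TypeError`. The following is a minimal sketch of a safer lookup, not part of the original script. The helper name `first_result_url` and the `SAMPLE_HTML` snippet are hypothetical, and it uses the built-in `html.parser` instead of `lxml` so that it runs without a network connection or extra parser.

```python
from bs4 import BeautifulSoup

# Hypothetical stand-in for a search results page (not real Tianyancha markup)
SAMPLE_HTML = '''
<div>
  <a class="index_alink__zcia5 link-click" href="https://example.com/company/1">First</a>
  <a class="index_alink__zcia5 link-click" href="https://example.com/company/2">Second</a>
</div>
'''

def first_result_url(html, selector='a.index_alink__zcia5.link-click'):
    """Return the href of the first element matching selector, or None."""
    soup = BeautifulSoup(html, 'html.parser')
    link = soup.select_one(selector)  # first match only, or None if no match
    if link is None:
        return None
    return link.get('href')

print(first_result_url(SAMPLE_HTML))
print(first_result_url('<p>Please log in</p>'))
```

In the real script, a `None` result would be the cue to stop and check the cookie or the selector before requesting the detail page.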